Videomark: A distortion-free robust watermarking framework for video diffusion models

Videomark: A distortion-free robust watermarking framework for video diffusion models

Videomark: A distortion-free robust watermarking framework for video diffusion modelsAbstract

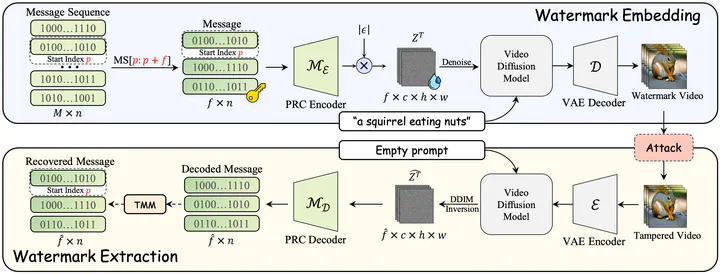

This work introduces VideoMark, a distortion-free robust watermarking framework for video diffusion models. As diffusion models excel in generating realistic videos, reliable content attribution is increasingly critical. However, existing video watermarking methods often introduce distortion by altering the initial distribution of diffusion variables and are vulnerable to temporal attacks, such as frame deletion, due to variable video lengths. VideoMark addresses these challenges by employing a pure pseudorandom initialization to embed watermarks, avoiding distortion while ensuring uniform noise distribution in the latent space to preserve generation quality. To enhance robustness, we adopt a frame-wise watermarking strategy with pseudorandom error correction (PRC) codes, using a fixed watermark sequence with randomly selected starting indices for each video. For watermark extraction, we propose a Temporal Matching Module (TMM) that leverages edit distance to align decoded messages with the original watermark sequence, ensuring resilience against temporal attacks. Experimental results show that VideoMark achieves higher decoding accuracy than existing methods while maintaining video quality comparable to watermark-free generation. The watermark remains imperceptible to attackers without the secret key, offering superior invisibility compared to other frameworks. VideoMark provides a practical, training-free solution for content attribution in diffusion-based video generation. Code and data are available at https://github.com/KYRIE-LI11/VideoMark.

Type

Publication

In arXiv preprint

Abstract

we propose VideoMark, a distortion-free robust watermarking framework for video diffusion models.